Find The Best Time To Send Email For B2B Success

The most popular answer to the best time to send email is still some version of “Tuesday at 10 AM.”

That advice is too neat to be useful.

In B2B, send time isn't a universal rule. It's a moving target shaped by who you're emailing, what you want them to do, what kind of message you're sending, and when that audience has the bandwidth to engage. A consultant emailing enterprise sales leaders isn't playing the same game as a product-led growth team sending a feature roundup to marketers, or a newsletter operator pushing a webinar registration.

Benchmarks still matter. They give you a starting point. But if you stop at generic advice, you end up optimizing for someone else's audience instead of your own.

Why Generic Send Time Advice Fails B2B Marketers

The idea that there's one best time to send email falls apart as soon as you look at audience differences.

Even broad industry commentary points in that direction. Emma notes that industry-specific timing contradicts one-size-fits-all recommendations, and that a cross-industry benchmark that cleanly compares segments still isn't available. That's a bigger problem than it sounds. If no shared benchmark can reliably tell you whether a SaaS founder behaves like a consultant, a creator, or a growth marketer, then “best time” advice becomes a rough guess.

That's why generic timing advice often produces mediocre results. It bundles together very different inbox behaviors and pretends they belong to the same decision model.

Different B2B audiences behave differently

A B2B email can target people with very different work rhythms:

- Enterprise sales leaders often triage inboxes around meetings and pipeline reviews.

- PLG teams may engage when they have room to think, not just when they first open email.

- Consultants and fractional CMOs move between client work, travel, and async review blocks.

- Newsletter creators may care more about click intent than immediate open behavior.

Those differences matter because send time isn't just about visibility. It's about context.

An email sent during a crowded work block might get opened and ignored. The same email sent later, when the reader has time to click, reply, or book time, can perform better even if it doesn't win on opens.

Generic send-time advice usually answers the wrong question. It asks when people check email, not when your audience is ready to act.

The benchmark is a starting point, not a decision

A lot of marketers look for a shortcut because timing feels operational. Pick a day, pick an hour, schedule, move on.

That habit is understandable, but it's expensive. If your timing is wrong, every subject line test, every CTA change, and every creative iteration gets judged under bad conditions. You don't learn what content works. You learn what content survived a poor send window.

Specialized resources can still help. If you're focused on outreach rather than newsletters, EmailScout's guide to the best time to send cold emails is useful because it frames timing as context-dependent instead of universal.

The practical shift is simple. Stop asking for the best time to send email as if it were a fixed answer. Start asking which send window is most likely to produce the outcome you care about from the specific audience you want.

That's a much better question. It also leads to a process you can repeat.

Deconstructing Common Industry Send Time Benchmarks

Benchmarks deserve respect. They just don't deserve blind trust.

If you're starting from scratch, you need a default window to test first. For B2B newsletters, that's still midweek, during work hours. According to Salesforce's summary of Bloomreach's 2025 analysis, Tuesday and Thursday are the strongest send days, engagement peaks between 10 AM and 3 PM in the recipient's local time, and open rates reach as high as 49.1% on high-engagement days.

That doesn't mean Tuesday at 10 AM is your answer. It means it's a credible baseline.

Why these windows became the default

Midweek, mid-morning works for familiar reasons.

People are usually past Monday catch-up mode. They're not yet mentally checked out for Friday. Their inbox is active, but the workday hasn't become fragmented enough to bury every non-urgent message. If your newsletter is educational, operational, or tied to work decisions, that environment helps.

The logic looks like this:

| Timing pattern | Why marketers use it | Where it tends to fit |
|---|---|---|
| Tuesday morning | Stable work rhythm, fewer post-weekend disruptions | B2B newsletters, product updates, educational sends |
| Thursday late morning | Readers are still in work mode but closer to action | Demos, webinars, offer emails |
| Early afternoon | Good for messages that don't require immediate reply | Thought leadership, digest formats |

These patterns are popular because they align with work routines, not because they reveal a universal law.

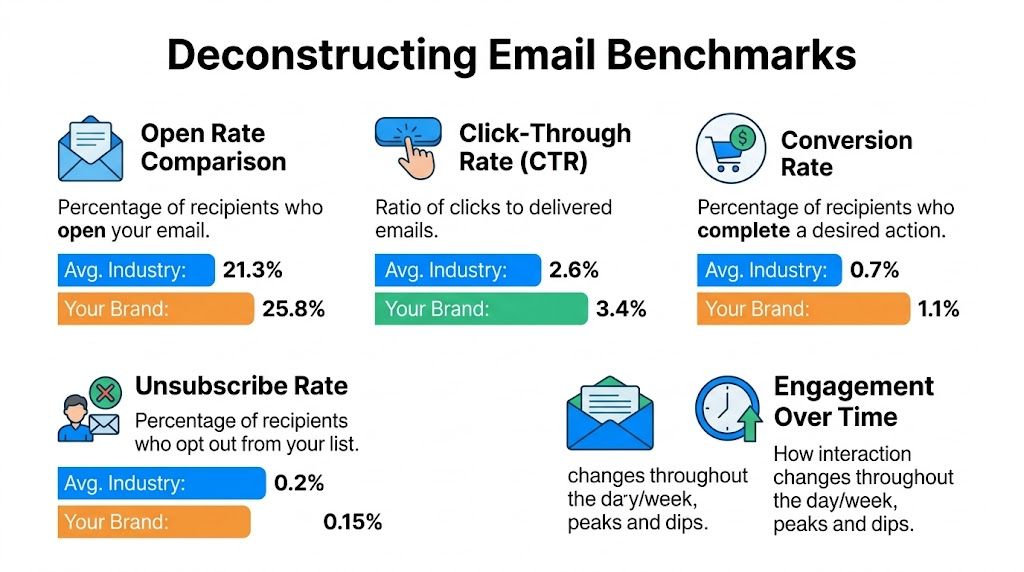

What benchmarks get right, and what they hide

Benchmarks are helpful when you need to narrow the field. They save you from random testing. They also help teams align around a reasonable default instead of debating every campaign from scratch.

Where they fail is in flattening the difference between opens and outcomes.

A send time can look strong because it drives visibility. That same send time can underperform on clicks, replies, booked meetings, or downstream pipeline. If your team only measures opens, you'll often crown the wrong winner.

Practical rule: Use benchmarks to choose your first test window, not your permanent schedule.

There's another issue. Benchmarks often treat “B2B” as one audience. It isn't. A cybersecurity buyer, a marketing operator, and a fractional consultant don't behave the same way. Their workday structure changes how they process newsletters.

That's why the right move after reviewing benchmarks isn't to commit. It's to test.

A more useful way to read benchmark data

Use benchmark data like a strategist, not like a scheduler.

Ask three questions:

- Is this benchmark based on a similar email type? A cold outbound message and a recurring newsletter don't compete for attention the same way.

- Is the KPI aligned with my goal? An open-rate winner may not be a reply-rate winner.

- Does the benchmark assume recipient local time? If it doesn't, the advice is incomplete.

If you want a deeper view on day-of-week trade-offs before you design your first experiment, this breakdown of the best day to send email is a useful companion.

The best time to send email usually starts with a benchmark. Mature teams just know better than to end there.

How to Form Your Initial Send Time Hypothesis

Most send-time tests fail before the first email goes out.

The problem isn't the platform. It's weak thinking upstream. Teams pick two random hours, split the list, and hope the data reveals something meaningful. Usually it doesn't. A useful test starts with a hypothesis grounded in audience behavior.

For enterprise sales and consultant audiences, there is at least one strong directional clue. EmailOpShop's summary of HubSpot and Yesware points to 10 to 11 AM on Tuesday and Thursday in recipient local time, with Gong.io identifying 11 AM as the highest-reply point in B2B contexts.

That gives you a starting point. It doesn't replace judgment.

Build the hypothesis from audience, content, and goal

A good send-time hypothesis connects three things that belong together.

First, audience. What does this person’s day look like? Enterprise account executives may spend early morning blocks reviewing priorities and entering meetings. Consultants may use late morning for async catch-up after client work starts. Growth marketers may check newsletters in the morning but click later when they have room to explore.

Second, content. Not every email asks for the same kind of attention. A plain-text insight with a direct reply ask behaves differently from a resource roundup with multiple links. A webinar invitation behaves differently from a strategic memo.

Third, goal. Are you optimizing for opens, clicks, replies, registrations, or subscriber growth? The wrong KPI produces the wrong hypothesis.

Here are three examples:

- Reply-led outreach to consultants. Hypothesis: send at 11 AM local time on Thursday because the audience is likely past initial inbox triage and more willing to respond to a focused ask.

- Educational newsletter for growth teams. Hypothesis: send Tuesday late morning because the message fits a work-mode reading block and doesn't require immediate conversion.

- Action-oriented roundup with a webinar CTA. Hypothesis: test a later window against your daytime control because readers may save click behavior for lower-pressure hours.

Write the hypothesis so it can fail

Vague hypotheses create vague learning.

Don't say, “Tuesdays seem good for B2B.” That's not a testable idea. Say, “For enterprise sales leaders on our list, a Thursday 11 AM local-time send will outperform Tuesday 2 PM on replies because it lands after morning triage but before afternoon meeting load.”

That version does two useful things. It predicts a result, and it explains why.

A strong hypothesis isn't a guess. It's a small model of buyer behavior.
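One way to keep a hypothesis falsifiable is to record it in a fixed shape before launch. The sketch below is only an illustration: the `SendTimeHypothesis` class and its field names are hypothetical, not part of any email platform. It rebuilds the example statement above from its parts, so a missing prediction or rationale is obvious.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SendTimeHypothesis:
    """A send-time hypothesis written so that a test can disprove it."""
    segment: str         # who receives the email
    test_window: str     # the window predicted to win
    control_window: str  # the window it is compared against
    primary_kpi: str     # the single metric that decides the test
    rationale: str       # why the test window should win

    def statement(self) -> str:
        """Render the hypothesis as one sentence with a prediction and a reason."""
        return (
            f"For {self.segment}, a {self.test_window} send will outperform "
            f"{self.control_window} on {self.primary_kpi} because {self.rationale}."
        )


hypothesis = SendTimeHypothesis(
    segment="enterprise sales leaders on our list",
    test_window="Thursday 11 AM local-time",
    control_window="Tuesday 2 PM",
    primary_kpi="replies",
    rationale="it lands after morning triage but before afternoon meeting load",
)
print(hypothesis.statement())
```

Because every field is required, a hypothesis with no control window or no stated reason cannot be created at all.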

Keep your first comparison tight

A lot of teams make the opening experiment too broad. They compare Monday evening to Thursday morning, change the subject line, alter the CTA, and then wonder why the result is impossible to interpret.

Start with a narrow contrast:

| Variable | Setup | Change between variants? |
|---|---|---|
| Audience | Same segment | No |
| Creative | Same subject line, same body, same CTA | No |
| Offer | Same value proposition | No |
| Time | One controlled comparison | Yes |

That discipline matters because timing is already noisy. If you also change message quality, you're not testing the best time to send email. You're testing several things at once and learning very little.
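A quick pre-launch check can enforce that discipline. This is a minimal sketch, assuming each variant is described as a plain dictionary. The field names and sample values are hypothetical.

```python
def changed_fields(variant_a: dict, variant_b: dict) -> set:
    """Return the names of fields whose values differ between two variants."""
    keys = variant_a.keys() | variant_b.keys()
    return {key for key in keys if variant_a.get(key) != variant_b.get(key)}


# Hypothetical variant definitions; only the send time should differ.
variant_a = {
    "segment": "enterprise sales leaders",
    "subject": "Pipeline review checklist",
    "body_id": "issue-42",
    "cta": "Reply with your top blocker",
    "send_time": "Thursday 11:00 local",
}
variant_b = {**variant_a, "send_time": "Tuesday 14:00 local"}

diff = changed_fields(variant_a, variant_b)
if diff == {"send_time"}:
    print("Clean test: only the send time changes.")
else:
    print("Confounded test, also changed:", sorted(diff - {"send_time"}))
```

If the check reports anything besides `send_time`, fix the setup before sending rather than trying to untangle the result afterward.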

A first hypothesis doesn't need to be perfect. It just needs to be plausible, specific, and linked to a business outcome your team cares about.

Designing a Statistically Sound Send Time Test

Many organizations don't need more creativity in send-time testing. They need more control.

A proper test isolates timing from everything else. Same audience type. Same content. Same subject line. Same CTA. The only thing that changes is the delivery window. If you don't hold those variables constant, the result is storytelling, not evidence.

A strong opening benchmark for B2B cold outreach exists. Siege Media's 2025 analysis of more than 85,000 personalized cold emails found that Monday between 6 and 9 AM PST produced the strongest engagement, with an open rate exceeding 20% and a reply rate of 2.8%. That's useful because it gives you a credible control window for outreach-style sends.

Start with one KPI hierarchy

Before you launch anything, decide what winning means.

Teams often get sloppy. They say they're testing send time, but they haven't agreed whether success is visibility, engagement, or conversion. Then one group points to opens, another to clicks, and sales asks which variant produced replies.

Use a simple hierarchy:

- Primary KPI: Pick one metric tied to campaign purpose. For outreach, that might be reply rate. For a newsletter with a resource CTA, it might be clicks.

- Secondary KPI: Track a supporting signal. Opens can help explain behavior, but they shouldn't automatically decide the winner.

- Guardrail metric: Watch for negative signals like unsubscribes or weak downstream quality.
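The hierarchy can be expressed as a small decision function. The sketch below is illustrative only: the counts, the metric names, and the 1% unsubscribe cap are all hypothetical assumptions. It reports which variant leads on the primary KPI among variants that stay within the guardrail. Whether the gap is large enough to trust is a separate question.

```python
def kpi_rates(stats: dict) -> dict:
    """Convert raw counts into per-delivered-email rates."""
    delivered = stats["delivered"]
    return {name: count / delivered for name, count in stats.items() if name != "delivered"}


def leading_variant(variants: dict, primary: str, guardrail: str, guardrail_cap: float):
    """Return the variant leading on the primary KPI, ignoring any that breach the guardrail."""
    rates = {name: kpi_rates(stats) for name, stats in variants.items()}
    eligible = [name for name in rates if rates[name][guardrail] <= guardrail_cap]
    if not eligible:
        return None  # every variant breached the guardrail
    return max(eligible, key=lambda name: rates[name][primary])


# Hypothetical results: A wins on opens, B wins on replies.
variants = {
    "A": {"delivered": 1000, "opens": 420, "replies": 22, "unsubscribes": 3},
    "B": {"delivered": 1000, "opens": 380, "replies": 31, "unsubscribes": 4},
}

leader = leading_variant(variants, primary="replies", guardrail="unsubscribes", guardrail_cap=0.01)
print(leader)
```

Opens never enter the decision here. They stay available in `kpi_rates` as the secondary signal for explaining why a variant led or lagged.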

If your team needs a framework for structuring these experiments cleanly, this guide to A/B testing email campaigns for higher engagement and ROI is a practical reference.

Segment the list before you split it

Send-time tests break when the cohorts aren't comparable.

If one group is mostly U.S. managers and the other is mixed geography with senior executives, the timing result gets contaminated fast. The audience composition changed, so the comparison stopped being fair.

Build cohorts with matching characteristics:

- Role similarity keeps work rhythms closer.

- Geography matters because local-time delivery changes inbox position.

- Lifecycle stage matters because newer subscribers often behave differently from long-time readers.

- Acquisition source matters because intent varies between organic signups, paid acquisition, and imported CRM contacts.

This is also where using a platform with local-time scheduling, audience filters, and clean reporting changes the quality of your test. A system such as Breaker can handle recipient-timezone delivery, targeting by ICP, and campaign analytics in one workflow, which reduces the operational errors that usually distort timing tests.
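If you build cohorts yourself, one way to keep them comparable is a stratified random split: group contacts by the characteristics listed above, then divide each group evenly between variants. This is a minimal sketch under stated assumptions. The contact fields (`email`, `role`, `region`) and the function name are hypothetical, and a real list would include lifecycle stage and acquisition source as well.

```python
import random
from collections import defaultdict


def stratified_split(contacts, strata_keys, seed=42):
    """Assign contacts to variants A and B so each stratum is split as evenly as possible."""
    rng = random.Random(seed)  # fixed seed keeps the assignment reproducible
    strata = defaultdict(list)
    for contact in contacts:
        strata[tuple(contact[key] for key in strata_keys)].append(contact)

    assignment = {}
    for members in strata.values():
        rng.shuffle(members)
        offset = rng.randrange(2)  # randomize which variant gets the extra contact
        for index, contact in enumerate(members):
            assignment[contact["email"]] = "A" if (index + offset) % 2 == 0 else "B"
    return assignment


# Hypothetical contacts: two per role-and-region combination.
profiles = [
    ("exec", "US"), ("exec", "US"), ("exec", "UK"), ("exec", "UK"),
    ("manager", "US"), ("manager", "US"), ("manager", "UK"), ("manager", "UK"),
]
contacts = [
    {"email": f"user{i}@example.com", "role": role, "region": region}
    for i, (role, region) in enumerate(profiles)
]

groups = stratified_split(contacts, ["role", "region"])
for contact in contacts:
    print(contact["role"], contact["region"], groups[contact["email"]])
```

Each role-and-region combination ends up with the same number of contacts in both variants, so neither cohort skews toward one role or one geography.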

Time zone handling is not optional

A “10 AM send” isn't a real send strategy if half your list receives it at lunch and the other half gets it before sunrise.

Local-time scheduling is one of the biggest dividing lines between trustworthy and misleading test results. If you want to compare two windows, recipients need to receive those windows in their own workday context.

A clean setup looks like this:

| Segment | Variant A | Variant B |
|---|---|---|
| US East | 10:30 AM local | 2:00 PM local |
| US West | 10:30 AM local | 2:00 PM local |
| UK | 10:30 AM local | 2:00 PM local |

Without that normalization, you're not testing behavior. You're testing calendar accidents.
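If your sending tool only accepts UTC timestamps, the normalization in the table can be sketched with Python's standard `zoneinfo` module, which relies on the system's time zone database. The segment-to-zone mapping and the send date below are assumptions for illustration. A real list needs a time zone per recipient, not per region.

```python
from datetime import date, datetime, time, timezone
from zoneinfo import ZoneInfo

# Hypothetical mapping from segment to IANA time zone name.
SEGMENT_ZONES = {
    "US East": "America/New_York",
    "US West": "America/Los_Angeles",
    "UK": "Europe/London",
}


def local_send_to_utc(send_date: date, local_time: time, tz_name: str) -> datetime:
    """Convert a wall-clock send time in the recipient's zone to a UTC instant."""
    local_dt = datetime.combine(send_date, local_time, tzinfo=ZoneInfo(tz_name))
    return local_dt.astimezone(timezone.utc)


send_date = date(2025, 1, 14)  # a Tuesday, chosen only for illustration
variants = {"A": time(10, 30), "B": time(14, 0)}

schedule = {
    (segment, variant): local_send_to_utc(send_date, local_time, tz_name)
    for segment, tz_name in SEGMENT_ZONES.items()
    for variant, local_time in variants.items()
}

for (segment, variant), utc_dt in schedule.items():
    print(f"{segment:8} variant {variant}: {utc_dt:%Y-%m-%d %H:%M} UTC")
```

The same local time produces a different UTC instant for each segment, which is exactly what a single global "10 AM send" gets wrong.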

Keep the experiment boring

The cleaner the test, the less exciting it feels to set up. That's a good sign.

Use the same subject line. Keep the email body unchanged. Don't refresh the design halfway through the test. Don't let sales manually follow up faster on one cohort than the other if reply speed affects conversion.

Let the test run long enough to capture real behavior

The temptation is to call a winner too early.

Resist that. Many B2B emails get their most meaningful engagement after the first open, not at the moment of delivery. Someone may see the message in the morning and click in the afternoon. Another may open it at work and reply later when they have a clearer block.

If you decide too early, the inbox wins. You end up measuring attention, not intent.
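To see how much the measurement window matters, count the same events under a short window and a longer one. The sketch below uses hypothetical click timestamps, and the two-hour and three-day windows are assumptions chosen for illustration, not recommended cutoffs.

```python
from datetime import datetime, timedelta


def events_within(delivered_at: datetime, event_times: list, window: timedelta) -> int:
    """Count events that occurred no later than `window` after delivery."""
    cutoff = delivered_at + window
    return sum(1 for t in event_times if delivered_at <= t <= cutoff)


# Hypothetical click timestamps for one variant delivered at 10:30.
delivered_at = datetime(2025, 1, 14, 10, 30)
click_times = [
    datetime(2025, 1, 14, 10, 45),
    datetime(2025, 1, 14, 15, 10),
    datetime(2025, 1, 15, 9, 5),
    datetime(2025, 1, 16, 20, 30),
]

early_read = events_within(delivered_at, click_times, timedelta(hours=2))
full_read = events_within(delivered_at, click_times, timedelta(days=3))
print("clicks after 2 hours:", early_read)
print("clicks after 3 days:", full_read)
```

Whatever window you choose, apply the same one to every variant so a later send is not penalized for having had less time to collect engagement.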

A useful send-time test is disciplined, local-time aware, and aligned to one business goal. That's what makes the result portable to the next campaign.

Analyzing Test Results to Find Your Winner

The hardest part of send-time optimization isn't running the test. It's refusing to overreact to surface metrics.

Many teams still crown the winner by open rate because it's visible first and easy to compare. That's a mistake. Opens tell you whether the email got attention. They don't tell you whether it produced the behavior you wanted.

Read metrics in the order of business value

If the email's job is to generate action, then clicks, replies, and downstream conversion deserve more weight than opens.

A practical review order looks like this:

- Primary outcome first: If you tested for replies, start there. If you tested for webinar registrations, start with clicks that led to that path.

- Support metric second: Look at opens to understand why a variant may have underperformed or overperformed.

- Quality signal third: Review unsubscribe behavior, spam complaints, or poor-fit conversions to make sure the winner isn't noisy traffic.

This matters most in executive and high-intent audiences. If you're benchmarking expectations for reply behavior, Salesmotion's guide to Cold Email Executives Reply Rates is a useful reality check on what “good” looks like in senior inboxes.

Not every apparent winner is a real winner

A small lift can be meaningful. It can also be random noise.

You don't need a data science team to handle this well. You just need restraint. If two variants are close and neither clearly improves your primary KPI, don't force a winner. Log the result as inconclusive and run the next test with a tighter audience or a wider time gap.
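If you want a quick numeric sanity check before logging a result, one common option is a two-proportion z-test, which estimates how likely a gap of that size would be if timing made no difference. This is a minimal sketch using only the standard library. The reply counts are hypothetical, and the 0.05 cutoff is a widely used convention, not a rule from this process.

```python
import math


def two_proportion_z_test(success_a: int, n_a: int, success_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference between two rates."""
    rate_a = success_a / n_a
    rate_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0, 1.0  # identical all-or-nothing rates: no evidence of a difference
    z = (rate_b - rate_a) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail of the normal distribution
    return z, p_value


ALPHA = 0.05  # conventional threshold, an assumption rather than a requirement

# Hypothetical reply counts out of 1,000 delivered emails per variant.
close_z, close_p = two_proportion_z_test(28, 1000, 34, 1000)
clear_z, clear_p = two_proportion_z_test(20, 1000, 60, 1000)

for label, p in [("close result", close_p), ("clear result", clear_p)]:
    verdict = "likely real" if p < ALPHA else "inconclusive"
    print(f"{label}: p = {p:.3f} -> {verdict}")
```

With reply rates this low, even lists of a thousand per variant can leave a visible gap inconclusive, which is one more reason to log close results as such and run a sharper follow-up test.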

Here are the common result patterns:

| Result pattern | What it usually means | What to do next |
|---|---|---|
| Higher opens, weaker clicks | Better visibility, worse action timing | Keep testing later or lower-pressure windows |
| Lower opens, stronger replies | Fewer people saw it, better people responded | Prioritize the reply winner if pipeline matters |
| No meaningful separation | Time may not be the limiting factor | Test content, audience segment, or a more distinct time contrast |
| One clear winner across primary and secondary metrics | Timing effect is likely strong | Roll forward and validate again |

Watch for hidden distortions

A result can look decisive even when the setup was flawed.

Maybe one cohort had more recent signups. Maybe one segment had stronger historical engagement. Maybe one variant coincided with a product launch, holiday period, or sales push that changed inbox behavior. That's why analysis needs context, not just a dashboard screenshot.

A useful habit is to review every result with a short diagnostic list:

- Did both cohorts have similar audience composition?

- Was the same content sent without edits?

- Did local-time scheduling work correctly?

- Did one segment receive extra follow-up or sales attention?

- Was the winning metric the one we agreed on before launch?

A send-time test only earns trust when the setup and the interpretation are both controlled.

Turn a result into a scheduling rule

The point of analysis isn't to admire the numbers. It's to create an operating rule your team can use.

For example:

- Educational newsletter for growth marketers: default to Tuesday late morning local time

- Reply-driven outreach to consultants: default to Thursday around late morning local time

- CTA-heavy event promotion: test a later action window against your daytime control

That rule should stay narrow. It belongs to a segment and a message type, not to your entire email program.
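One way to keep rules that narrow is to key them by both segment and message type, with no global fallback. The sketch below is illustrative: the dictionary, the key strings, and the function name are hypothetical, and the windows simply restate the examples above.

```python
from typing import Optional

# Hypothetical rule table keyed by (segment, message type).
SCHEDULING_RULES = {
    ("growth marketers", "educational newsletter"): "Tuesday late morning, local time",
    ("consultants", "reply-driven outreach"): "Thursday late morning, local time",
}


def default_window(segment: str, message_type: str) -> Optional[str]:
    """Return the tested default window, or None when no rule has been validated."""
    return SCHEDULING_RULES.get((segment, message_type))


print(default_window("growth marketers", "educational newsletter"))
print(default_window("growth marketers", "event promotion"))
```

Returning `None` for an untested combination is deliberate. It signals that the send needs its own test instead of silently borrowing a rule from another audience.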

If you want a practical example of how teams operationalize that kind of learning, this send time optimization B2B case study shows what a more disciplined feedback loop looks like.

The best time to send email becomes useful only when it graduates from a one-off test result into a repeatable scheduling rule tied to a specific audience and objective.

Advanced Timing Strategies and Common Pitfalls

Once you've found a likely winner, the work changes. You're no longer hunting for any decent send time. You're refining timing by segment, intent, and message type.

This is where static advice is weakest. The winning slot for a newsletter issue often isn't the winning slot for a webinar push, a direct reply ask, or a promotional email with a sharper CTA.

Test non-obvious windows on purpose

A lot of B2B teams never test evenings because the old playbook says business email belongs in business hours.

That leaves opportunity on the table. Customer.io's 2025 data shows evening sends can reach click-through rates up to 9.01% and open rates of 59% around 8 to 9 PM, driven by lower inbox competition. That doesn't mean you should move your whole calendar to evenings. It does mean you should stop assuming that daytime visibility and action timing are the same thing.

Evening tests make the most sense when the email asks for deliberate action:

- Webinar signups

- Resource downloads

- Newsletter issues with one strong CTA

- Promotional sends that need a click, not a reply

Segment timing by role and message

One timing rule for the whole database is usually too blunt.

Executives, managers, practitioners, and consultants often process email differently. The same goes for different content classes. A thought-leadership note can win in a work-mode block. A sponsor-driven or click-heavy send may do better when the inbox is quieter.

A mature timing calendar usually separates at least these categories:

| Segment or email type | Likely testing direction |
|---|---|
| Senior B2B decision-makers | Morning and late-morning reply windows |
| Operators and marketers | Midweek workday reading blocks |
| CTA-heavy newsletter sends | Evening click windows |
| Promotional campaigns | Compare standard work hours against lower-competition periods |

Avoid the mistakes that make tests useless

Most send-time programs don't fail because timing doesn't matter. They fail because the testing discipline collapses.

The repeat offenders are familiar:

- Testing too many variables at once: If you change day, hour, subject line, and CTA together, you won't know what caused the result.

- Declaring victory after one win: A single strong campaign can reflect topical relevance, not timing strength. Validate the result.

- Using one universal default forever: Audiences shift. Content mix changes. Seasonality changes. Timing rules need periodic rechecks.

- Ignoring list quality: If the audience is a poor fit or inactive, no send-time adjustment will rescue the campaign.

The teams that get timing right don't treat it as a one-time optimization. They treat it like ongoing audience research.

The best time to send email isn't fixed. It evolves with the list, the message, and the action you're trying to drive.

Turn Your Newsletter into a Growth Engine

The best time to send email isn't a magic hour. It's a repeatable operating process.

Strong teams start with benchmarks, but they don't confuse benchmarks with truth. They form a hypothesis based on audience, content, and goal. They test one timing variable at a time. They analyze results using business metrics, not vanity metrics. Then they turn the winner into a narrow rule for a specific segment and message type.

That's how send-time optimization becomes useful. It stops being trivia and starts becoming an advantage.

For B2B marketers, that impact matters because timing affects more than opens. It affects when your message gets noticed, when a buyer has room to click, and when a prospect is willing to reply. Done well, timing improves the efficiency of every campaign you already send.

The teams that win with newsletters don't ask for a universal answer. They build a system that keeps producing better answers over time.

If you want a platform that combines newsletter sending, ICP-based audience targeting, local-time scheduling, deliverability support, and real-time analytics in one workflow, take a look at Breaker. It helps B2B teams test, learn, and improve send timing without stitching together multiple tools.